Barriers

Our team uses agents to review new AI literature, ship software, and automate small language model experiments. We demand a lot from our agents and expect more from them every month. But even when using frontier models in their latest harnesses, our agents fail us. They hallucinate citations, reward hack our test suites, and make decisions that are scientifically unsound. All serious AI users have experienced these shortcomings, but the promised automation is too good to pass up. The world is charging full steam ahead deploying agents that make decisions about markets, healthcare, and even war. It should concern us that verifying the work of our agents has largely gone unsolved.

Slop in, Slop out

Agent behavior isn’t the only problem we have to contend with. It’s easier than ever before to produce garbage content at scale. How can we tell what’s true when we are flooded with noisy articles and tweets parroting each other to maximize views? Worse still, it is dead simple to run sophisticated scams and misinformation campaigns. Snap a photo of a site and generate flawless phishing emails. Snap a photo of a person and generate a video of them doing and saying whatever you want. We are trying to automate our workflows while being surrounded by an increasingly adversarial information environment.

Verified Intelligence

If we are besieged by malicious information and misbehaved agents, how do we trust AI? When we started dFusion a couple years ago, we set out to curate verified, quality information. As the landscape evolved, we became increasingly aware of the need to expand verification to AI tasks. Verified Intelligence requires a multi-faceted approach.

Fast Validation

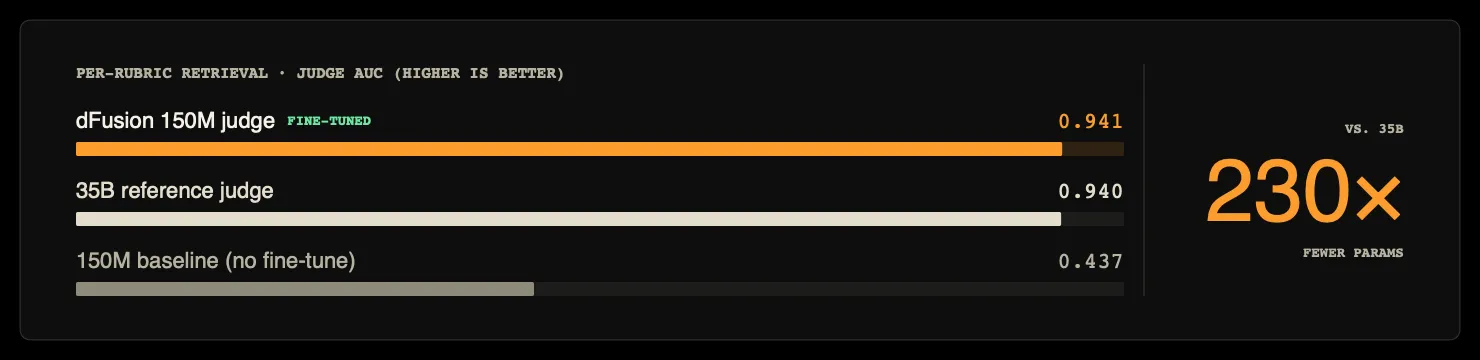

LLM Judges are used to grade agent outputs and select AI training data. These judges allow us to scale intelligent data processing; instead of matching words or format, we can do sophisticated things like grade content against rubrics. The field has matured to the point that researchers now publish benchmarks for evaluating the judges themselves — JudgeBench being a notable example — finding that even frontier models perform only marginally better than random on challenging factual and reasoning pairs. Hundreds of thousands of dFusion users have contributed to data to our curated knowledge pods, building multibillion token datasets across 75 domains like Global Markets, AI Research Papers, and Animal facts. The vast majority of submissions do not make the cut, and we’ve trained our own judges to process submissions at scale. We’ve run into many scaling and cost issues here, and it is clear that working with that much data requires models that are fast and cheap. Using Late Interaction, we’ve been able to train fast, tiny models that compete with judges hundreds of times their size.

Corroboration

Surely we should not take an LLM’s word for verified truth. We need external validation from varied sources to make informed decisions and determine what we believe to be the truth. FEVER workshops and datasets seek to address this directly. Some information verification requires multiple web searches and filtering out misinformation. By iterating using decentralized open source competition, our fact checking agents have gone from grabbing the top search results to reaching 88% accuracy against some of FEVER’s golden question sets.

The Harness

Even with confidence in our information, how do we know that agents actually performed their jobs? There are many things working against agents tackling big tasks — exploding context causes inaccuracy and confusion, errors accumulate between steps, and sub-tasks might be glossed over. We’ve been building harnesses (the app the AI runs in) that reduce errors and verify sub-tasks. Xinming Tu wrote an excellent piece that we think nails the shape harnesses need to take. The main concepts are: break down tasks, isolate tasks so agents don’t get distracted, and verify subtasks. Few of the top harnesses today do this. Leveraging our previous data validation techniques along with our own in-harness validation techniques, our agents are solving hard problems from datasets like SealQA. More to come on harness philosophy in later posts.

Looking Forward

It’s more important than ever that we nail down the technology that enables verified intelligence. The world needs agents that get their jobs done and don’t put core infrastructure and processes at risk. We are tackling the problem of verification across the agentic stack, and will be sharing more product announcements and tech deep dives soon.